In the process of creating the initial backup of a large (1TB+) repository, I noticed that whenever I interrupted (i.e. stopped) the backup process, resuming the backup afterwards takes increasingly long time. From what I understand, this is because duplicacy has no index of all previously uploaded files and therefore has to “discover” what chunks already exist, until it finds some that it actually still needs to upload.

But I wonder whether it is correct that this literally takes hours (almost 6 at this point, and still counting). If this is by design, I wonder if there is not room for improvement here (though I have no suggestion as to how).

If this is not by design, I wonder what might be causing this. My only guess, at the moment, is that it has something to do that I tried resuming the backup not via the GUI but via the CLI instead (it was initiated, stopped, and resumed via the GUI several times before without problems, except for the increasingly long waiting time). Are there any pitfalls when switching between CLI and GUI?

My hunch is that this long waiting time is by design after all (my backup archive has now exceeds 900 GB in size). My main reason for believing this is that duplicacy is still actively reading files from the repository, according to the windows task manager.

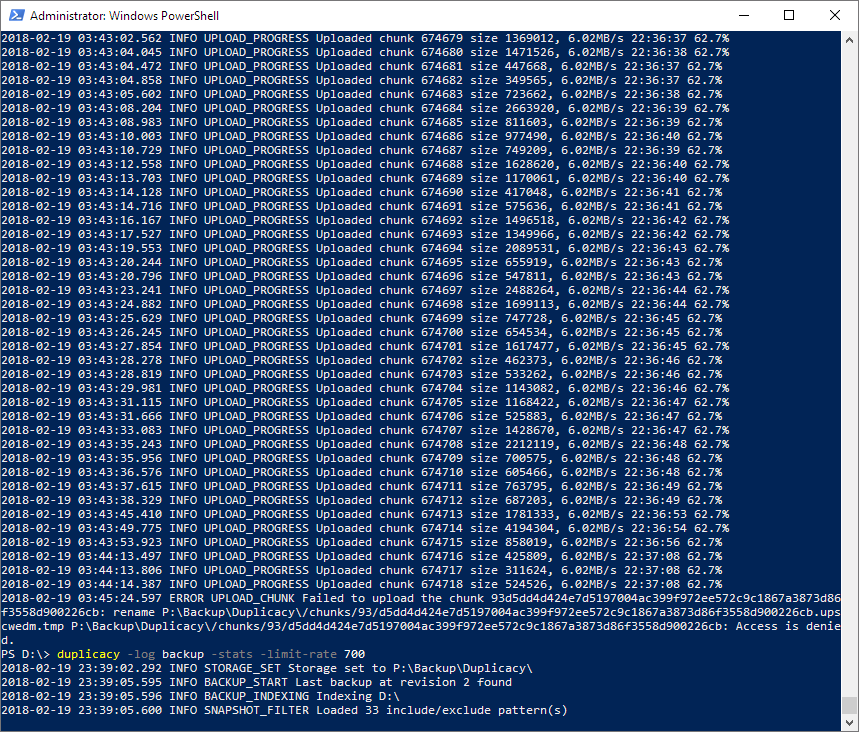

My main reason for doubting that everything is in order is that my understanding was that whenever duplicacy is skipping chunks to upload, it says so in the backup -stats. But so far, the only output the job produced is this:

Storage set to P:\Backup\duplicacy

No previous backup found

Creating a shadow copy for D:\

Shadow copy {E8237DE6-EC1B-4E41-BF96-660929B660BC} created

Indexing D:\christoph

Loaded 21 include/exclude pattern(s)

In contrast, a different job a couple of days ago produced this when resumed:

Storage set to P:\Backup\duplicacy

No previous backup found

Indexing T:\

Incomplete snapshot loaded from c:\duplicacy\prefs/incomplete

Listing all chunks

Skipped 2299 files from previous incomplete backup

Skipped chunk 1 size 8427437, 8.04MB/s 00:24:38 0.0%

Skipped chunk 2 size 2007431, 9.95MB/s 00:19:54 0.0%

Skipped chunk 3 size 6222934, 15.89MB/s 00:12:28 0.1%

Skipped chunk 4 size 2979547, 18.73MB/s 00:10:34 0.1%

Skipped chunk 5 size 1059019, 19.74MB/s 00:10:02 0.1%

Skipped chunk 6 size 8930050, 28.25MB/s 00:07:00 0.2%

Skipped chunk 7 size 10986522, 38.73MB/s 00:05:06 0.3%

Skipped chunk 8 size 1392134, 40.06MB/s 00:04:56 0.3%

Skipped chunk 9 size 2115144, 42.08MB/s 00:04:42 0.3%

...

The difference is that the latter job never had anything to do with the GUI. And there is no -vss involved, because the repository is on a samba share (accessed from the windows pc).

So this is why I’m confused…