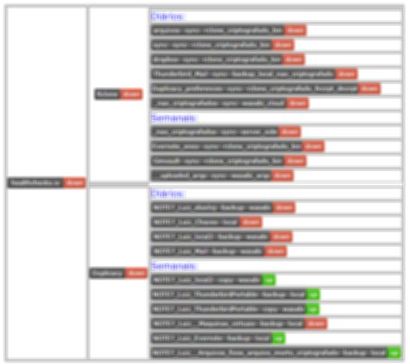

@TheBestPessimist is using healthchecks.io to monitor backup status: Scripts and utilities index.

I thought I’d show some examples in case you want to do this in your scripts. I use the CLI (command line) version, and Windows 10 and Windows 7 as backup clients.

https://healthchecks.io is a service that lets you set up “checks”. If a check does not receive a signal within a reasonable time (expected period + grace period), you can have a mail sent to you. You can also send a failure signal, to avoid waiting for the grace period. Signals can be sent to the service by mail or an HTTP request. The service is free for limited personal use: you can monitor 20 checks, with limited logging (the last 100 events).
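To make the timing concrete, here is a small PowerShell sketch of the arithmetic. Get-AlertTime is a name I made up for illustration (it is not something the service provides), and the daily period with a two-hour grace is just a sample setting.

```powershell
# Sketch: when would a check be considered overdue?
# Get-AlertTime is a hypothetical helper, not part of healthchecks.io.
function Get-AlertTime {
    param(
        [datetime]$LastPing,   # time of the last success signal
        [timespan]$Period,     # expected time between signals
        [timespan]$Grace       # extra time allowed before alerting
    )
    # The check is overdue once period + grace has passed since the last signal
    return $LastPing.Add($Period).Add($Grace)
}

$lastPing = [datetime]'2024-01-01T03:00:00'
$alertAt  = Get-AlertTime -LastPing $lastPing -Period (New-TimeSpan -Days 1) -Grace (New-TimeSpan -Hours 2)
$alertAt   # 2 January 2024 at 05:00
```

So with these sample settings, a backup that last reported at 03:00 has until 05:00 the next day before a mail goes out.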

The beauty of this kind of service is that, although it might not be 100% fail-proof, it is independent of your computer, which could crash or be switched off.

To avoid false positives, you must select your period and grace settings wisely, especially if the computer is seldom used. (You can use the “Start” feature for this.)

To test these examples, first register on the service. Set up a check and copy the URL for it. You can paste this URL into the command examples wherever I write https://hc-ping.com/your-check-url

Since I am a Windows/CLI user, I will show some examples for the Windows environment. I use PowerShell and bat files for these examples, but the object System.Net.WebClient can be used in some other languages as well.

The first simple example requires Windows 10, or PowerShell version 3 or newer. (See below for Windows 7 and older PowerShell versions.)

The PowerShell command is:

Invoke-RestMethod 'https://hc-ping.com/your-check-url'

You can execute this from a bat-file like this:

powershell.exe -command "&{Invoke-RestMethod 'https://hc-ping.com/your-check-url'}"

This sends a signal to the service saying that this check is OK. You probably only want to do this when a backup has been run successfully!

I recommend a scheduled job which performs a backup, logs the result locally, and (if successful) reports the status to healthchecks.io.

Let’s say you have set up a Duplicacy repository at X:\YourRepositoryFolder, and installed the CLI files to C:\Program Files\Duplicacy. Create a batch file in a location where it cannot be modified without administrator rights (e.g. C:\Program Files\Duplicacy) and schedule a task to run it, for instance once a day.

A batch file in Windows is a text file with the file extension .cmd or .bat.

(.cmd looks more modern)

CD /D X:\YourRepositoryFolder

"C:\Program Files\Duplicacy\duplicacy.exe" backup

If not Errorlevel 1 powershell.exe -command "&{Invoke-RestMethod 'https://hc-ping.com/your-check-url'}"

The /D option for the CD command selects the X: drive and then changes the current folder to the path specified. This allows Duplicacy to find the repository.

The If Errorlevel command is true if the exit code of the previous command is equal to or higher than the number provided after “Errorlevel”.

“If not Errorlevel 1” is true if the exit code of the previous command is 0 (success).

(If Errorlevel 0 is always true, so it cannot be used here.)
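The same rule written out in PowerShell may make the comparison easier to see. Test-ErrorLevel is a made-up helper that only mimics how the batch If Errorlevel test behaves; in a real PowerShell script you would normally just compare $LASTEXITCODE with 0.

```powershell
# Mimics batch "If Errorlevel N": true when the exit code is N or higher.
# Test-ErrorLevel is a hypothetical helper for illustration only.
function Test-ErrorLevel {
    param([int]$ExitCode, [int]$Level)
    return ($ExitCode -ge $Level)
}

Test-ErrorLevel -ExitCode 0 -Level 1   # False, so "If not Errorlevel 1" runs its command
Test-ErrorLevel -ExitCode 2 -Level 1   # True, a non-zero exit code counts as a failure
Test-ErrorLevel -ExitCode 0 -Level 0   # True, which is why "If Errorlevel 0" is no use here
```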

Remember, this is just an example. You can add options from the Duplicacy user guide to show more details, redirect the output to a log file, or do whatever else you need.

If you want, you can report a failure immediately when Duplicacy reports an error, like this:

CD /D X:\YourRepositoryFolder

"C:\Program Files\Duplicacy\duplicacy.exe" backup

If not Errorlevel 1 (

REM Exit code is not 1 or higher, we're good!

powershell.exe -command "&{Invoke-RestMethod 'https://hc-ping.com/your-check-url'}"

) Else (

REM If we got here something is wrong, send the fail signal

powershell.exe -command "&{Invoke-RestMethod 'https://hc-ping.com/your-check-url/fail'}"

)
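The “Start” feature mentioned earlier works in a similar way: you append /start to the check URL and request it before the backup begins, so the service knows the job is running and can measure how long it takes. Below is a rough PowerShell sketch of the whole flow, assuming PowerShell version 3 or newer. Get-PingUrl and Invoke-MonitoredBackup are names I made up, and the paths are the same placeholders as above.

```powershell
# Build the ping URL for a signal: 'start', 'fail', or '' for success.
# Get-PingUrl is a hypothetical helper, not part of healthchecks.io.
function Get-PingUrl {
    param([string]$CheckUrl, [string]$Signal = '')
    $base = $CheckUrl.TrimEnd('/')
    if ($Signal) { return "$base/$Signal" }
    return $base
}

# Hypothetical wrapper: signal start, run the backup, then report the result.
function Invoke-MonitoredBackup {
    param([string]$CheckUrl)
    Invoke-RestMethod (Get-PingUrl $CheckUrl 'start') | Out-Null
    Set-Location 'X:\YourRepositoryFolder'
    & 'C:\Program Files\Duplicacy\duplicacy.exe' backup
    if ($LASTEXITCODE -eq 0) {
        # Exit code 0: report success
        Invoke-RestMethod (Get-PingUrl $CheckUrl) | Out-Null
    } else {
        # Any other exit code: report failure right away
        Invoke-RestMethod (Get-PingUrl $CheckUrl 'fail') | Out-Null
    }
}

# Usage:
# Invoke-MonitoredBackup -CheckUrl 'https://hc-ping.com/your-check-url'
```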

If your script performs more tasks, for instance a local backup first and then a copy to an external destination, you should only send a signal to the monitoring service when all operations have succeeded.

If you want to send more info, or customize the User-Agent string (since it is shown in the healthchecks.io logs), you could do it like this:
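One way to sketch that rule in PowerShell is to run the steps in order and stop at the first failure. Invoke-Steps is a hypothetical helper; it assumes each step is a script block whose last output is the exit code of the command it ran.

```powershell
# Run each step in order; return $true only if every step ended with exit code 0.
# Invoke-Steps is a hypothetical helper for illustration.
function Invoke-Steps {
    param([scriptblock[]]$Steps)
    foreach ($step in $Steps) {
        # The last value a step outputs is treated as its exit code
        $code = @(& $step)[-1]
        if ($code -ne 0) { return $false }   # stop at the first failure
    }
    return $true
}

# Usage ('offsite' is a made-up storage name):
# $exe = 'C:\Program Files\Duplicacy\duplicacy.exe'
# $ok = Invoke-Steps @(
#     { & $exe backup | Out-Host; $LASTEXITCODE },
#     { & $exe copy -to offsite | Out-Host; $LASTEXITCODE }
# )
# if ($ok) { Invoke-RestMethod 'https://hc-ping.com/your-check-url' }
```

Stopping early means the copy is never attempted on top of a failed backup, and the success signal is only sent when nothing went wrong.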

powershell.exe -command "&{Invoke-RestMethod 'https://hc-ping.com/your-check-url' -headers @{'User-Agent'='%Computername%'} -body 'Documents OK' -method POST}"

This way it is possible to collect results from the Duplicacy output and put them in the healthchecks.io log.

(You might want to be careful with what details you publish this way.)

This works on Windows 7 and uses PowerShell version 2:
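A sketch of how that could look in PowerShell: capture the Duplicacy output, keep only the last few lines, and send them as the request body. Get-LogTail is a hypothetical helper, and the 20-line and 1000-character defaults are arbitrary values I picked to keep the body small, not limits taken from the service.

```powershell
# Keep the last few lines of some output and cap the total length.
# Get-LogTail is a hypothetical helper; the default limits are arbitrary.
function Get-LogTail {
    param([string[]]$Lines, [int]$MaxLines = 20, [int]$MaxChars = 1000)
    $tail = @($Lines | Select-Object -Last $MaxLines)
    $text = $tail -join "`n"
    if ($text.Length -gt $MaxChars) {
        # Keep the end of the text, which holds the most recent output
        $text = $text.Substring($text.Length - $MaxChars)
    }
    return $text
}

# Usage (PowerShell version 3 or newer):
# $output = & 'C:\Program Files\Duplicacy\duplicacy.exe' backup 2>&1 | ForEach-Object { "$_" }
# if ($LASTEXITCODE -eq 0) {
#     Invoke-RestMethod 'https://hc-ping.com/your-check-url' -Method POST -Body (Get-LogTail $output)
# }
```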

powershell.exe -command "&{(New-Object System.Net.WebClient).DownloadString('https://hc-ping.com/your-check-url')}"

Customizing the User-Agent and body with this is a little more complex.
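For reference, here is a minimal sketch of what that might look like with WebClient, written to avoid anything newer than PowerShell version 2. New-PingClient is a name I made up; Headers.Add and UploadString are standard WebClient members, and UploadString sends a POST by default.

```powershell
# Create a WebClient with a custom User-Agent.
# New-PingClient is a hypothetical helper for illustration.
function New-PingClient {
    param([string]$UserAgent = $env:COMPUTERNAME)
    $client = New-Object System.Net.WebClient
    $client.Headers.Add('User-Agent', $UserAgent)
    return $client
}

# Usage: POST a short message as the request body
# $client = New-PingClient
# $client.UploadString('https://hc-ping.com/your-check-url', 'Documents OK') | Out-Null
```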
